I show here a way to use some new C++ features (coroutines and generators) to improve performance on composing sparse linear algebra algorithms by making it possible to share data between consecutive steps. I do this by replacing the more standard “callback-style” interface for sparse linear algebra algorithms with “reverse communication” which was once a common interface style for Fortran libraries (and still is e.g. for ARPACK). Reverse communication has always been possible in C++ but language features did not really make it a welcoming strategy. The introduction of coroutines (C++20) and later std::generator (C++23) considerably cleans up reverse communication and gives a uniform interface to it for all C++ programmers. I give first some context behind reverse communication, why it existed, and how callbacks later replaced it.

Reverse communication was devised for sparse linear algebra algorithms because of the following contention:

and especially in the early days of Fortran library implementation where it was very common to specify all interfaces in highly concrete terms strictly with only “plain old data” and arrays this posed a significant challenge to support all the possible sparse matrix formats a user might want to use. For example (Dongarra, Eijkhout, & Kahan, 1995) makes the case for reverse communication:

In this report we describe a reverse communication interface for the software implementing the iterative methods described in the Templates book [2]. Reverse communication is a technique by which we can hide the implementation details of various operations from the implementation of the iterative method. This allows us to (a) remove references to the user-prepared array or data structure containing the matrix within the iterative solver, and (b) uniformly take care of the various components that can be changed by a user. These include implementation details of matrix-vector operations, vector operations, stopping tests, and norm computations.”

or in (Lehoucq,Sorensen,Yang)

“ARPACK uses the reverse communication interface to make it easy for a user to work with any sparse matrix format or provide a customized sparse matrix vector multiplication subroutine. When a matrix operation is required it returns control to the calling program with a flag indicating what operation is required. The calling program must then perform the operation and call the ARPACK routine again to continue. The operations are typically matrix-vector products, and solving linear systems.”

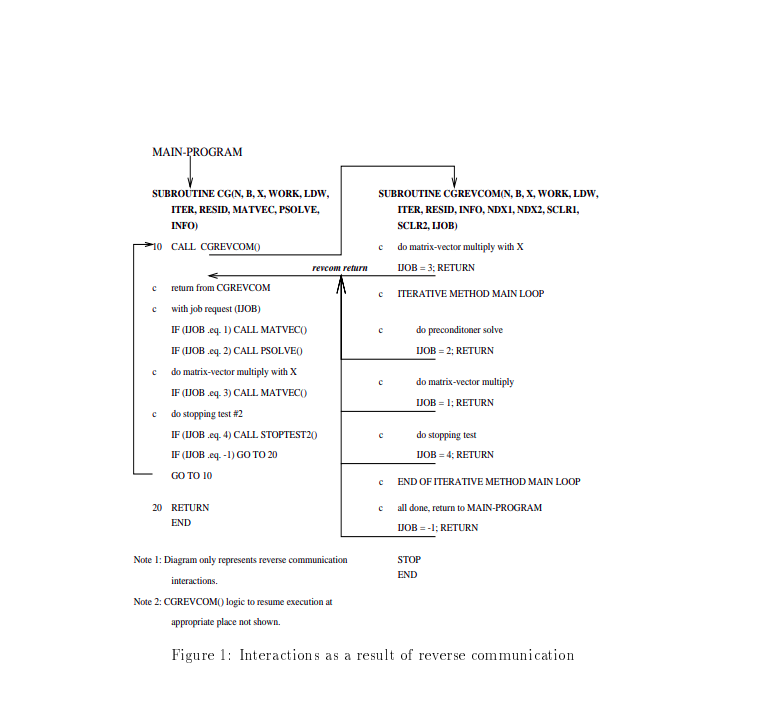

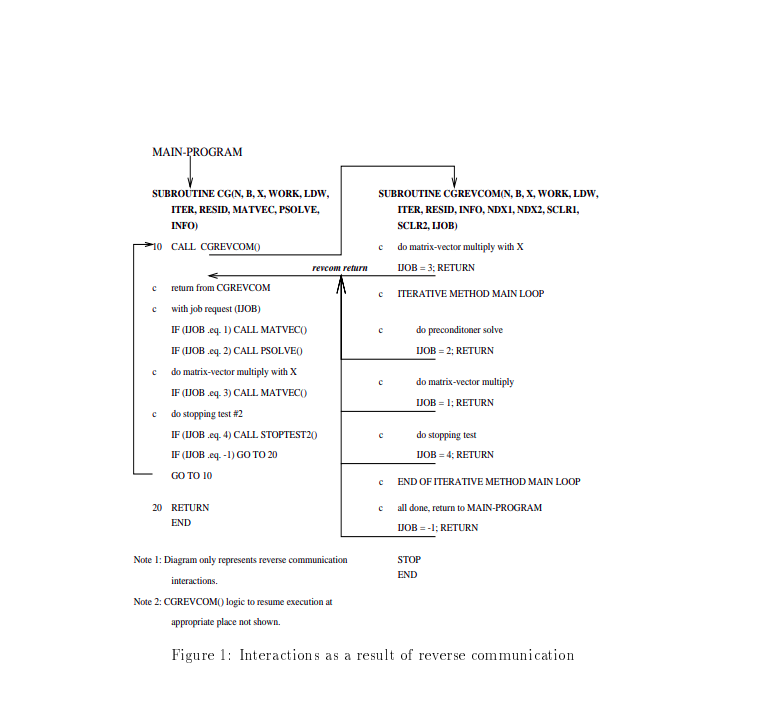

the implementation of reverse communication usually worked as follows:

while loop repeatedly calling the main algorithm that uses reverse communicationthe diagram from (Dongarra, Eijkhout, & Kahan, 1995) illustrates this flow:

We will see shortly that this is very similar to “coroutines” which have very recently achieved official support in standard C++, but before discussing that I will show how the world kind of moved on from reverse communication to callbacks

The reason for the somewhat complex calling sequence just to abstract over matrices in Fortran was that early Fortran did not support functions as a first-class object, you could not pass functions or even pointers to functions as arguments. Most languages that followed Fortran (including more advanced standards of Fortran itself) did allow this passing around of functions. This callback-style became the far more prevalent way to design linear algebra interfaces because it was vastly simpler on the caller and has no apparent performance deficiency compared to reverse communication (it has performance-parity).

That last remark on performance-parity kind of assumes that all these algorithms run in a vacuum. But actually they often run in parallel with each other and feed into one another as well - a complex sequence of linear algebra algorithms may be called in sequence used as building blocks to build up ever-more sophisticated algorithms. I have found that in this context the callback approach inadvertently introduces a major inefficiency because all of these separate algorithms don’t know what the other is doing it requires multiple redundant evaluations of matrix-vector products (each requiring a full scan of the sparse matrix). Thus the reverse communication approach by returning control back to the caller affords the caller the opportunity to batch together multiple operations requiring the same data - usually their sparse matrix. This results in considerable savings on memory reads.

Reverse communication has always been possible in C++, but coroutines make their implementation considerably easier and furthermore expose a universal interface that requires less specialized documentation for an experienced C++ to understand. I will show next how to implement the conjugate gradient algorithm using both callback style and reverse-communication style with coroutines.

Conjugate gradients is a nice algorithm for illustration because it is very easy to implement and the primary computational cost is found in the evaluation of matrix-vector products (and the preconditioner if you are using one, but just for simplicity I do not use them here).

A classical implementation of conjugate gradients in C++ will use a callback for the matrix-vector product like below. Look for uses of the input lambda A.

template<typename T,typename Lambda>

std::vector<T> cg_plain(size_t nrows,Lambda A, std::span<const T> b,size_t maxiter){

bool verbose = false;

auto dot = [](std::span<const T> x, std::span<const T> y) -> T{

assert(x.size() == y.size());

T out = 0.0;

for(size_t i=0;i<x.size();i++){

out += x[i]*y[i];

}

return out;

};

std::vector<T> x(nrows,0.0);

std::vector<T> r(nrows,0.0);

std::vector<T> p(nrows,0.0);

std::vector<T> q(nrows,0.0);

/*Calculate initial residual.*/

A(x,r);

for(size_t i=0;i<r.size();i++){

r[i] = b[i] - r[i];

}

std::copy(r.begin(),r.end(),p.begin());

T beta = 0.0;

T rho_last = 0.0;

T rho_current = 0.0;

for(size_t it=0;it<maxiter;it++){

rho_current = dot(r,r);

if(verbose){

std::print("iteration: {}, residual: {}\n",it,std::sqrt(rho_current));

}

if(it>0){

beta = rho_current/rho_last;

for(size_t i=0;i<p.size();i++){

p[i] = r[i] + beta*p[i];

}

}

A(p,q);

T alpha = rho_current / dot(p,q);

/*Update.*/

for(size_t i=0;i<x.size();i++){

x[i] = x[i] + alpha*p[i];

r[i] = r[i] - alpha*q[i];

}

rho_last = rho_current;

}

return x;

}

In this way we have an independently testable algorithm such that the user can use any sparse matrix format they may want so long as they can expose it to the algorithm in the form of a callback. The calling sequence to use this is very simple I show a snippet for this below because we will later contrast it with reverse communication:

auto x = cg_plain(nrows,

[&A](std::span<const double> in, std::span<double> out){

/*A is a sparse matrix using CSR format, but algorithm only need `matvec`.*/

A.matvec(in,out);

},std::span<const double>(b),maxiter);

To implement reverse communication I use coroutines and generators. Instead of status flags as was used in Fortran reverse communication I use a std::variant. This requires a little extra setup but it’s surprisingly similar to the callback-style implementation thanks to elegant co_yield now supported by C++:

/* These are deferred operations that will be co_yielded from our reverse communication CG.*/

/* Deferred operation for a matrix-vector product.*/

template<typename T>

struct deferred_matvec{

std::span<const T> in;

std::span<T> out;

};

/*An indicator that the algorithm is complete, storing its result.*/

template<typename T>

struct done{

T result;

};

/* Note how the signature no longer includes _any_ reference to an input matrix, not even as a callback - it's now the users' responsibility! .*/

template<typename T>

std::generator< std::variant<deferred_matvec<T>,done<std::vector<T>>> > cg_revcomm(size_t nrows,std::span<const T> b,size_t maxiter){

bool verbose = false;

auto dot = [](std::span<const T> x, std::span<const T> y) -> T{

assert(x.size() == y.size());

T out = 0.0;

for(size_t i=0;i<x.size();i++){

out += x[i]*y[i];

}

return out;

};

std::vector<T> x(nrows,0.0);

std::vector<T> r(nrows,0.0);

std::vector<T> p(nrows,0.0);

std::vector<T> q(nrows,0.0);

/*Calculate initial residual.*/

co_yield deferred_matvec<T> { .in = x, .out = r };

for(size_t i=0;i<r.size();i++){

r[i] = b[i] - r[i];

}

std::copy(r.begin(),r.end(),p.begin());

T beta = 0.0;

T rho_last = 0.0;

T rho_current = 0.0;

for(size_t it=0;it<maxiter;it++){

rho_current = dot(r,r);

if(verbose){

std::print("iteration: {}, residual: {}\n",it,std::sqrt(rho_current));

}

if(it>0){

beta = rho_current/rho_last;

for(size_t i=0;i<p.size();i++){

p[i] = r[i] + beta*p[i];

}

}

co_yield deferred_matvec<T> { .in = p, .out = q };

T alpha = rho_current / dot(p,q);

/*Update.*/

for(size_t i=0;i<x.size();i++){

x[i] = x[i] + alpha*p[i];

r[i] = r[i] - alpha*q[i];

}

rho_last = rho_current;

}

co_yield done<std::vector<T>> { .result = x };

}

What has effectively happened here is that wherever a callback would have been called we just replaced it with a deferred evaluation and co_yield it back to the caller. Each co_yield returns a variant which either is deferred_matvec or done. The new calling sequence is more complicated just like in the Fortran case:

//Holds the output

std::vector<double> x;

//Elegant use of `std::generator` allows us to iterate it to completion as a range loop

for(auto deferred_op : cg_revcomm(nrows, std::span<const double>(b), maxiter)){

//Each `co_yield` from cg_revcomm returns a variant which is either a matvec or a done,

//thus we need `std::visit` to unpack the two possibilities

std::visit([&](auto&& arg){

using DT = std::decay_t<decltype(arg)>;

/*CG has requested ("reverse-communicated") a sparse matrix-vector product

* so now we evaluate that here.*/

if constexpr (std::is_same_v<DT, deferred_matvec<double>>){

auto [in, out] = arg;

A.matvec(in,out);

}

/*Algorithm has produced an answer which we capture.*/

else{

auto [tmp_x] = arg;

x = tmp_x;

}

}, deferred_op);

}

But this establishes that is is indeed possible to use coroutines to implement reverse communication, at the cost of a more complex calling sequence. I will show next however how we can use this to our advantage for performance.

I give a small contrived example (for simplicity) to illustrate that reverse communication enables potential data sharing between algorithms, saving on memory bandwidth and speeding up the algorithm:

Suppose we don’t just want to solve a sparse linear system but we also want the spectral radius (largest absolute eigenvalue) of the matrix? There’s another algorithm that also only requires matrix-vector products for the spectral radius called power iteration. We could run conjugate gradients in parallel with the power iteration but this effectively doubles up memory reads over the input matrix A because each evaluation of matvec requires a full scan of A’s datastructure. If we stick to the callback-style approach we’re kind of stuck with this inefficiency unless we custom-write a combined algorithm that can efficiently share A’s data. I think it should be obvious why we should prefer not to do this every time we want to compose linear algebra algorithms, so now I show how to avoid that with reverse communication.

I will omit power iteration implementation as it has almost exactly the same structure as conjugate-gradients above. The reverse-communication signature however looks as follows:

template<typename T>

std::generator< std::variant<deferred_matvec<T>, done<std::pair<T,std::vector<T>>> > >

power_revcomm(size_t nrows,std::span<const T> x,size_t maxiter);

Now we can co-iterate both of these algorithms in tandem and use a batched matrix-vector product whenever both algorithms call for it, effectively cutting the number of reads over A in half:

/**

* The idea here is instead of simply sequentially iterating both coroutines

* to completion we can co-iterate them and batch up any matching

* matrix-vector products involving `A`, greatly reducing

* the redundant memory reads while still keeping

* the algorithms logically separate.

*/

auto cg_eval = cg_revcomm(nrows, std::span<const double>(b),maxiter);

auto power_eval = power_revcomm(nrows, std::span<const double>(b),maxiter);

auto cg_it = cg_eval.begin();

auto power_it = power_eval.begin();

std::vector<double> x;

double lambda;

std::vector<double> y;

while(cg_it != cg_eval.end() && power_it != power_eval.end()){

auto cg_op = *cg_it;

auto power_op = *power_it;

bool cg_is_matvec = std::visit([&](auto&& arg) -> bool{

using DT = std::decay_t<decltype(arg)>;

if constexpr (std::is_same_v<DT, deferred_matvec<double>>){

return true;

}

return false;

},cg_op);

bool power_is_matvec = std::visit([&](auto&& arg) -> bool{

using DT = std::decay_t<decltype(arg)>;

if constexpr (std::is_same_v<DT, deferred_matvec<double>>){

return true;

}

return false;

},power_op);

/*We have four posibilities:

* Either both CG and power iteration have requested a

* matrix-vector product,one of them is done,

* or both of them are done.

*

* But in all other cases we just handle them with the

* same visitor pattern as before.

* */

if(cg_is_matvec && power_is_matvec){

/*In this case since both algorithms want matrix-vector product

* with the same matrix `A` we batch them up.*/

auto [in0,out0] = std::get<deferred_matvec<double>>(cg_op);

auto [in1,out1] = std::get<deferred_matvec<double>>(power_op);

A.matvec_batch2(in0,in1,out0,out1);

}

else{

std::visit([&](auto&& arg){

using DT = std::decay_t<decltype(arg)>;

/*Power iteration has requested ("reverse-communicated") a sparse matrix-vector product

* so now we evaluate that here.*/

if constexpr (std::is_same_v<DT, deferred_matvec<double>>){

auto [in, out] = arg;

A.matvec(in,out);

}

/*Algorithm has produced an answer which we capture.*/

else{

auto [result] = arg;

auto [tmp_lambda,tmp_y] = result;

y = tmp_y;

lambda = tmp_lambda;

}

}, power_op);

std::visit([&](auto&& arg){

using DT = std::decay_t<decltype(arg)>;

/*CG has requested ("reverse-communicated") a sparse matrix-vector product

* so now we evaluate that here.*/

if constexpr (std::is_same_v<DT, deferred_matvec<double>>){

auto [in, out] = arg;

A.matvec(in,out);

}

/*Algorithm has produced an answer which we capture.*/

else{

auto [tmp_x] = arg;

x = tmp_x;

}

}, cg_op);

}

cg_it++;

power_it++;

}

//Must clean-up either CG or power may not have finished but I leave as

//exercise to the interested reader

that’s a lot of code dealing with variants which I think can be cleaned up a lot, but as a proof of concept it at least shows this works. The key lines are the following where we call a batched matvec

/*We have four posibilities:

* Either both CG and power iteration have requested a

* matrix-vector product,one of them is done,

* or both of them are done.

*

* But in all other cases we just handle them with the

* same visitor pattern as before.

* */

if(cg_is_matvec && power_is_matvec){

/*In this case since both algorithms want matrix-vector product

* with the same matrix `A` we batch them up.*/

auto [in0,out0] = std::get<deferred_matvec<double>>(cg_op);

auto [in1,out1] = std::get<deferred_matvec<double>>(power_op);

A.matvec_batch2(in0,in1,out0,out1);

}

My performance results have been pretty interesting, which I discuss next.

I set up an example where I randomly generate a symmetric positive definite sparse matrix using the CSR format with 200000 rows, 30 nonzeros per row, and a graph-bandwidth of about 30. I then tested the following implementation strategies:

In general I expected that the batching versions should outperform non-batching versions, and that is indeed the case:

| Algorithm Type | Time (milliseconds) |

|---|---|

| Callback Sequential | 3766 |

| Reverse Communication Sequential | 3763 |

| Batched Reverse Communication | 3026 |

| Callback Multithreaded | 1837 |

| Batched Reverse Communication Multithreaded | 1618 |

When optimization is turned on (in this case I just used -O3) there is no apparent overheads of the std::generator compared to the callback approach, and the batched versions are a good bit faster.

This was an experiment to see if C++ coroutines might offer a new approach to interfaces for linear algebra algorithms. The idea here is to allow for the possibility that multiple algorithms could share data while still implementing those algorithms in logically self-contained functions. C++ coroutines have very cleanly enabled this pattern. The batching concept I introduced also shows good performance benefit over the non-batched algorithms, so there is potential performance benefit to taking this approach over callbacks because batching in this way just can not be easily implemented with functions using callbacks.

The main drawback here was the added code complexity on the callers. So I think work is needed to simplify this new pattern. Perhaps linear algebra interfaces opting to use reverse communication should have a level-0 interface that exposes reverse communication and a level-1 interface that uses callbacks (but just uses the reverse communication implementation under-the-hood). Most of the time, especially in algorithm prototyping phases, the user will prefer the simpler callback interface, but in later optimizing stages they will want to seek opportunities for data sharing and reverse communication could offer a real possibility there.

You can find the (proof of concept) code for my results here